by John Walker

Once you have a data network which interconnects computers, there is scarcely anything more obvious to do with it than download and upload files—take data there and copy it to here and vice versa. It's not surprising, then, that one of the first protocols defined in the infancy of the ARPANET, ancestor of the Internet, was the original File Transfer Protocol, promulgated in RFC 114 on April 16th, 1971, later updated in RFC 765 and RFC 959. More than thirty-five years later, downloading (and to a lesser extent uploading) files remains one of the essential applications on the now-global Internet; users require a way to reliably transfer files among hosts on the network, regardless of their size, content, and the details of the machines at either end of the network connection.

With the advent of the World-Wide Web, its HTTP (Hypertext Transfer Protocol) has become the most commonly used means of downloading files as well as viewing Web pages, although virtually all Web browsers (but few Web servers) also support the FTP protocol. Since its inception in 1994, Fourmilab has made all of its content available through both HTTP and FTP. While most people today download files with their Web browser using HTTP, modern FTP clients provide some advantages over the HTTP download implementation of many popular browsers; in particular, interrupted downloads may be resumed from the point of interruption, without the need to re-transfer the portion of the file already received.

The companion to this document discusses, in detail, the most common source of complaints to Web site managers that files downloaded from their servers are “corrupted”. As is usually the case these days when corruption is discussed in connection with computers, the cause is not at the Web site or on the server, but in Redmond, Washington, where Microsoft produce their laughably unreliable, insecure, and standards uncompliant attempt at a Web browser called “Internet Explorer” by those who make it, and “Exploder” (among a multitude of even less complimentary epithets) by Web developers forced to cope with its eccentricities thanks to the regrettable market share it has attained. I sometimes wonder if Microsoft deliberately made its icon a blue “e” because clicking it is so likely to make your computer go Blooie!

Relevant to the topic here, and in short, the problem is that lengthy downloads with Internet Explorer have a disturbing tendency to end up short: lopped off somewhere in the middle, with no indication whatsoever to the user or the Web site administrator that anything whatsoever is amiss. The user who downloaded the file usually becomes aware of the problem when attempting to extract files from the downloaded archive, at which point the extract utility will report the download as “corrupt” or “damaged”. If this weren't bad enough, Internet Explorer compounds the problem by storing a copy of the incomplete file in its browser cache (which Microsoft prefers to call “Temporary Internet files”, insisting, like the old bad IBM of the 1950s and 60s, on having their own name for everything—always one different from the rest of the industry). Unless the user wipes out these files, subsequent attempts to download the file in the hope it may be received correctly are foredoomed, since Explorer will simply deliver a copy of the original truncated download from the cache, again providing the user no indication that it is doing so.

Now, the real solution to this problem (and many, many others) is, as discussed in the companion document, to abandon Internet Explorer in favour of a competently implemented Web browser from another supplier. But some users may not wish, or in centrally managed corporate environments not be permitted, to install non-Microsoft software on their machines. (Given the risks of spyware to behind-the-firewall corporate networks, this is a legitimate concern, although one must note that Internet Explorer has been the principal vector for the infection of computers with such malevolent software for the better part of a decade.)

FTP, at first glance, appears to be an attractive alternative in such situations, at least for downloads which are available with that protocol. Fourmilab is unusual in making all of its content available with FTP as well as HTTP, but many other sites, particularly in the free software world, provide an FTP option for large downloads. Every copy of Microsoft Windows since Windows 95 has shipped with a command-line FTP client pre-installed, so this seems an ideal (albeit somewhat clunky) solution: an application factory-installed on every Windows machine which can reliably download large files without ever using Internet Explorer.

But this is Microsoft we're discussing, so, as usual, there is A Catch. In this case it is the command-line FTP client that Microsoft furnishes with Windows. This is a piece of software which belongs in an ancient history museum, not on your hard drive. When the FTP protocol was originally designed, all of the machines on which it was used were directly connected to the then-ARPANET, with permanently assigned network addresses. Innovations such as Network Address Translation (NAT) and firewalls were decades in the future when FTP was designed; they would be introduced in the 1990s to cope with the massive public pile-on to what had by then matured into the Internet, the resulting address space shortage, and the need to protect against attacks by malicious individuals and software. Consequently, the original design of FTP is not compatible with many NAT devices (for example, DSL and cable modems) or with many firewalls. Some devices provide compatibility with the original FTP protocol by carefully inspecting network traffic, but you can't assume a typical network connection, especially one aimed at home users accessing the Web, will work with original FTP.

Fortunately, there is a work-around, called “passive mode” FTP, which has been part of the FTP standard ever since RFC 765 dating from June 1980, more than five years before the initial release of Microsoft Windows. Nevertheless, more than a quarter of a century later, the Microsoft Windows FTP client still does not support passive mode transfers. (Microsoft people may tell you that their FTP client does support passive mode FTP, and that “all you have to do is enter the command ‘quote PASV’ before starting the transfer”. This is untrue. This command, which simply sends a “PASV” command to the remote server, will indeed put the server into passive mode, and you'll receive a nice confirmation of this event. But Microsoft's FTP client still doesn't understand passive mode, so it will attempt to make the file transfer in active mode, which won't work.)
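To see why merely sending “PASV” is not enough, it helps to look at what a client has to do with the server's answer. Under RFC 959 the confirmation is a “227” reply carrying six numbers: four for the address and two for the port, which the client must combine and then connect to. The sketch below parses such a reply in Python; the function name and the sample reply (which uses a reserved documentation address) are my own illustrations, not output from any real server.

```python
import re

def parse_pasv_reply(reply):
    """Return (host, port) from a 227 reply of the form (h1,h2,h3,h4,p1,p2)."""
    if not reply.startswith("227"):
        raise ValueError("not a PASV confirmation: " + reply)
    match = re.search(r"(\d+),(\d+),(\d+),(\d+),(\d+),(\d+)", reply)
    if match is None:
        raise ValueError("no address found in: " + reply)
    numbers = [int(n) for n in match.groups()]
    host = ".".join(str(n) for n in numbers[:4])
    # The port arrives as two bytes, high byte first.
    port = numbers[4] * 256 + numbers[5]
    return host, port

# Illustrative reply only; 192.0.2.1 is a reserved documentation address.
sample = "227 Entering Passive Mode (192,0,2,1,195,80)"
host, port = parse_pasv_reply(sample)
print(host, port)
```

A client which understands passive mode opens its data connection to that host and port. One which ignores the reply, as described above, carries on in active mode regardless.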

The upshot of all of this is that whether the Microsoft FTP client will work for you depends upon the details of your connection to the Internet. If you have a true direct connection or your interface has special support for active mode FTP, then the instructions below will work, but if your connection requires passive mode, you're out of luck, and you won't be able to use Microsoft's FTP client to download files. If you are able to install software on your machine, you have the option of installing NcFTP, a free FTP client which runs on a wide variety of machines including Microsoft Windows and supports passive mode as well as numerous innovations over the decades which Microsoft do not see fit to make available to their clientele. Another alternative is SmartFTP, which includes a nice graphical user interface; it is not free software, but may be used for non-commercial purposes without payment.

Every document on the Web, including every file you download, is identified by a Uniform Resource Locator or URL. (Such an item is more properly deemed a Uniform Resource Identifier or URI, as defined in RFC 1630, but when used for Web addresses, almost everybody calls them URLs, and I'm not going to confuse things by insisting on the term URI here.) This is the Web address which appears in the Address box at the top of your browser's window, for example:

http://www.fourmilab.ch/homeplanet/download/3.3a/hp3full.zip

This URL specifies the “Full” edition of Home Planet, a 14 megabyte ZIPped archive which we'll use as the example for a large download in this document. Most browsers will display the complete URL for a link in the status line at the bottom of the browser window when you move the cursor above the link, and allow you to copy the URL to the clipboard by right clicking on the link and choosing a menu item like “Copy Shortcut” or “Copy Link Location”.

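Make a note of the URL you wish to download, and break it into the following pieces: the site name (“www.fourmilab.ch”), the Directory (“/homeplanet/download/3.3a/”), and the File name (“hp3full.zip”).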

Finally, rewrite the Directory piece with “/web” prefixed to the start, yielding “/web/homeplanet/download/3.3a/” in this case. Now we're ready to download the file.
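If you would rather let the computer do the dissection, the same breakdown can be sketched in a few lines of Python. This is only an illustration: the function name and the dictionary keys are my own inventions, and the “/web” prefix is the Fourmilab-specific rewriting rule described above, which will not apply to other sites.

```python
import posixpath
from urllib.parse import urlsplit

def split_download_url(url, prefix="/web"):
    """Break a Web URL into the pieces needed for an FTP download."""
    parts = urlsplit(url)
    directory, file_name = posixpath.split(parts.path)
    if not directory.endswith("/"):
        directory += "/"
    return {
        "site": parts.hostname,
        "directory": directory,
        # Fourmilab-specific rule: prefix the Directory piece with "/web".
        "ftp_directory": prefix + directory,
        "file_name": file_name,
    }

pieces = split_download_url(
    "http://www.fourmilab.ch/homeplanet/download/3.3a/hp3full.zip")
for name, value in pieces.items():
    print(f"{name:14} {value}")
```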

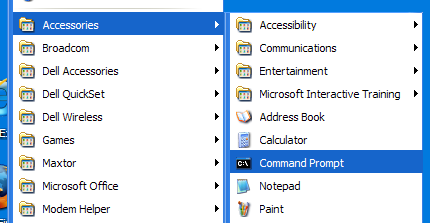

Begin by launching a “Command Prompt” window (that's what it's called on Windows XP; on older versions of Windows it is called an “MS-DOS Window” but it's the same thing). If you don't have a shortcut to this on your desktop, you can find it under “Accessories” from the “All Programs” menu of the Start button. If you're going to be doing this a lot, you may wish to right click on the command prompt item and create a desktop shortcut to it. Having a command prompt shortcut on your desktop marks you, among cognoscenti, as somewhat of an expert, so it's of value purely for snob appeal.

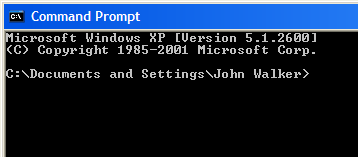

The command prompt window will open and show a prompt which indicates the current directory (folder) in which you are working. On Windows XP, this will be your principal user directory within “Documents and Settings”. On older versions of Windows, you may find yourself in a different directory, including, in Microsoft's inimitable style, C:\WINDOWS, the most dangerous conceivable default. If this is the case, navigate to somewhere safe to download your files. In the following paragraphs, I'm going to describe things you type in and responses you will get from the computer. Items you type will be shown underlined in monospace bold type, while responses you receive will be in monospace normal type. They will not, of course, be so distinguished in the command prompt window.

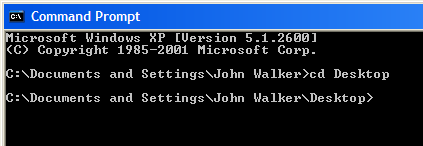

The next step is to specify the directory (or folder) into which the downloaded file will be placed. The simplest option is to choose the “Desktop” directory, which will cause it to appear directly on your desktop where it's easy to find and open when the download is complete. You select the destination directory by entering a “change directory” (CD) command, specifying the directory name.

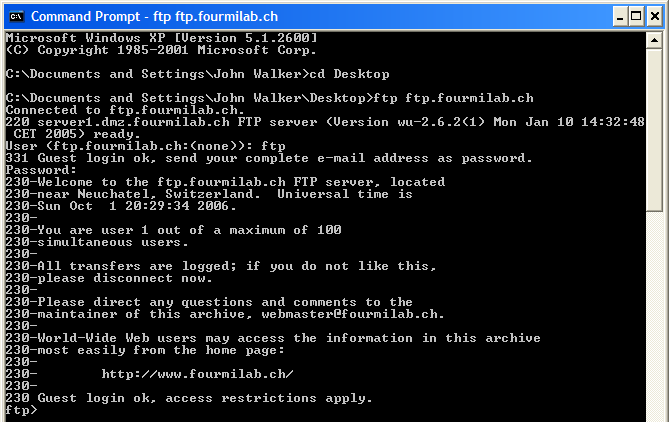

Once the destination directory has been selected, you can launch the FTP (File Transfer Protocol) application which will be used to download the file. This command line utility has been included as a standard part of all releases of Windows starting with Windows 95. Enter the FTP command at the prompt, following it with the name of the site to which you wish to connect; for Fourmilab this is ftp.fourmilab.ch. (In fact, you can specify www.fourmilab.ch or just fourmilab.ch because these are all the same site, but this is not necessarily the case for sites other than Fourmilab.)

The FTP program will attempt to connect to the site you specified, and if all is well you will then be asked to log in. File downloads use an anonymous login—you do not need to have an account or special permission. When asked for your user name, just enter “ftp” (you can also specify “anonymous” if you prefer, but that isn't as easy to spell and type). For anonymous or guest logins, you are asked to enter your E-mail address as a password. In fact, you can enter anything at all; the Fourmilab server will complain if what you enter doesn't look like an E-mail address, but it will allow you to log in anyway.

The server will then display a welcome message and issue its “ftp>” invitation for you to enter a command. Skipping the lengthy welcome message, the login process can be summarised as follows:

If your internet connection requires “passive mode” FTP, you would enter “passive” immediately after receiving the “ftp>” prompt. But, as noted above, Microsoft's FTP client does not support this command. I mention it here in case you are using a competently-implemented client program which does.
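For comparison, here is roughly what the same login looks like when scripted with Python's standard `ftplib` module, which does understand passive mode. Treat it as a sketch under assumptions: the function name and the `ftp_class` parameter (there only so the code can be exercised without a network) are mine, and nothing here connects anywhere until you call the function.

```python
from ftplib import FTP

def open_anonymous(host, email, passive=True, ftp_class=FTP):
    """Connect to host, log in as "ftp", and select passive or active mode."""
    ftp = ftp_class(host)                  # connects to the site
    ftp.login(user="ftp", passwd=email)    # anonymous login, E-mail as password
    ftp.set_pasv(passive)                  # the scripted form of "passive"
    return ftp
```

Calling `open_anonymous("ftp.fourmilab.ch", "you@example.com")` would attempt the login described above, assuming the site still accepts FTP connections; the E-mail address is a placeholder.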

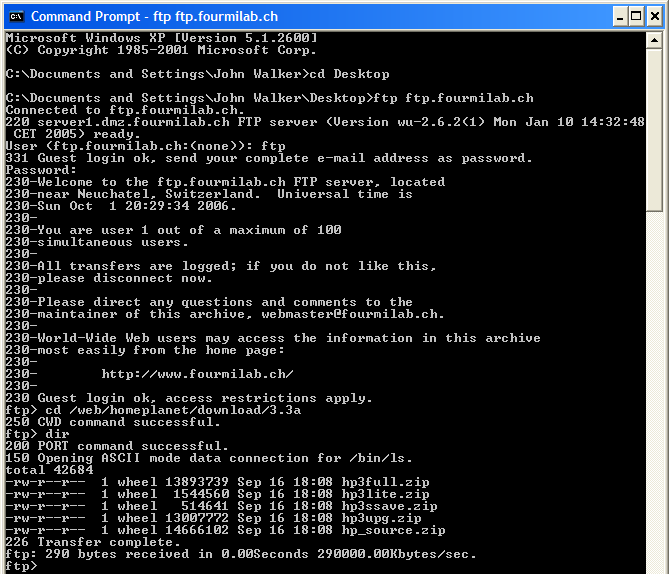

Remember back where we broke up and rewrote the URL? Now it's time to use that information. The FTP program, like the Windows command prompt, has its own “change directory” command, but in this case it specifies the source directory: the location on the remote server from which you will download the file. This is the directory name you rewrote with “/web” at the start. Enter this after the “cd” command at the “ftp>” prompt. After doing so, you may want to enter a “dir” command to list the files in the directory to be double sure you're in the right place.

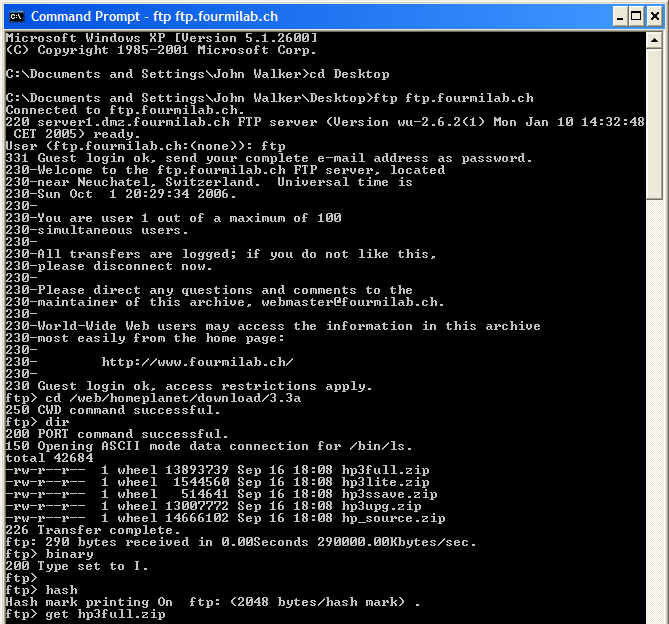

At last, we're ready to actually download the file. Due to decisions, mostly regrettable, made back in the bronze age of computing, FTP can download files in two different modes: text and binary. Even more regrettably, the default in Microsoft's FTP program (and to be fair, those of most other vendors) is text mode which, if used when downloading binary files such as zipped archives, images, or audio files, will cause them to be utterly corrupted. It is therefore absolutely essential that you select binary mode before downloading the file; this is accomplished straightforwardly enough by entering the “binary” command.
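To see how text mode wrecks a binary file, consider this deliberately simplified model in Python. It assumes that a text-mode transfer arriving on Windows rewrites every line feed byte as a carriage return followed by a line feed; real clients and servers differ in the details, and the sample bytes are made up apart from the four-byte ZIP signature at the start.

```python
def simulate_text_mode(data):
    """Simplified model: every LF byte becomes CR LF, as for a text file."""
    return data.replace(b"\n", b"\r\n")

# "PK\x03\x04" is the ZIP local file header signature; the rest is arbitrary.
original = bytes([0x50, 0x4B, 0x03, 0x04, 0x0A, 0x00, 0x0A, 0xFF])
received = simulate_text_mode(original)

print(len(original), len(received))   # the "text" copy has grown
print(received == original)           # and no longer matches
```

Two of the eight bytes happen to have the value of a line feed, so the received copy comes out two bytes longer and the archive is ruined. Binary mode transfers the bytes untouched.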

By default, FTP does not give any indication of progress during the download. If you're downloading a large file, particularly on a slow Internet connection, you won't normally have any indication the download is proceeding before you either see the message that indicates it has completed or an error message telling you it failed. Specifying the “hash” command before starting the download enables a crude form of progress indicator which prints an octothorpe character (“#”, sometimes called a “hash mark”, hence the command name) at periodic intervals during the data transfer. It's ugly, but I generally find it preferable to not knowing if the transfer has hung up for some reason, and I recommend this command for most users.

There's nothing left but to start the download, which is accomplished with the “get” command, specifying the File name extracted from the URL. Here is the sequence of commands that begins a file transfer:

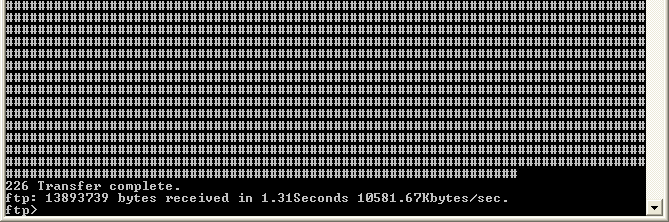

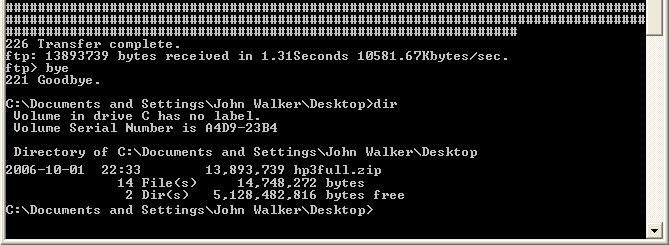

If you specified the “hash” command, you will now see a sequence of octothorpe characters appear on the screen as the file is transferred. If all goes well, when the entire download has been received, a “Transfer complete” message will appear, followed by a line which specifies the number of bytes transferred and how long the transfer took.
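The “cd”, “binary”, “hash”, and “get” steps can also be sketched with Python's `ftplib`, for those who prefer a script to typing at a prompt. The assumptions: `ftp` is an already logged-in `ftplib.FTP` object, the function name is my own, and the `hash_every` interval is an arbitrary choice rather than whatever any particular FTP client uses.

```python
import sys

def download(ftp, remote_dir, file_name, local_path, hash_every=2048):
    """Fetch file_name from remote_dir in binary mode, printing '#' marks."""
    ftp.cwd(remote_dir)                     # the "cd" step
    received = 0
    marks = 0
    with open(local_path, "wb") as out:
        def on_block(block):
            nonlocal received, marks
            out.write(block)
            received += len(block)
            # One mark per hash_every bytes received so far.
            while marks < received // hash_every:
                sys.stdout.write("#")
                marks += 1
            sys.stdout.flush()
        # retrbinary always transfers in binary mode: "binary" plus "get".
        ftp.retrbinary("RETR " + file_name, on_block)
    sys.stdout.write("\n")
    return received
```

The function returns the number of bytes received, which you can compare against the size the server reports.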

You may now quit the FTP program by entering the “bye” command, which will return you to the Windows command prompt. You may then wish to use the “dir” command to verify the presence of the file you've just downloaded and confirm that its length agrees with the length of the file on the server (from the FTP “dir” command) and the length of the download displayed after the “Transfer complete” message.
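The three-way length comparison is easy to get wrong by eye when the numbers run to eight digits, so here is a minimal Python sketch of it. The function and parameter names are hypothetical; you supply the server's length (as read from the FTP “dir” listing) and the byte count reported at the end of the transfer yourself.

```python
import os

def lengths_agree(local_path, server_length, transferred):
    """True only if the local file, the server listing, and the transfer agree."""
    local_length = os.path.getsize(local_path)
    return local_length == server_length == transferred
```

If this returns False, the download was truncated or altered somewhere along the way and should be repeated.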

With the download complete, you're done with the command prompt window and may close it by entering the “exit” command. If you downloaded the file to your Desktop, you should now see the file displayed there, whence you can open it, drag it to another destination, or do whatever you wish with it.

The FTP process may seem fussy, cumbersome, and antiquated. Frankly, that's because it is all of those things, but give it a break—this protocol is thirty-five years old, and was created at a time when the backbone of the ARPANET ran about as fast as a dial-up modem today. There are numerous fancier and far more friendly FTP utilities available, for example SmartFTP (which is free for non-commercial use), but the command line FTP procedure described here, however primitive, doesn't require you to install anything.

Even with a protocol as robust as FTP, occasionally “bit happens”: your Internet connection may drop out, the site from which you're downloading may go down, or you may accidentally close the window in which the download is running. Modern FTP clients support the “reget” command which, if issued instead of the “get” in the example above, will resume the download from the point of interruption without the need to transfer the portion of the file you've already received. Naturally, Microsoft's slime-dwelling pre-Cambrian FTP client does not implement this refinement, which would improve the fault tolerance of the system and the experience of the user; it seems that in Microsoft's estimation the customer experience must be uniformly brutalising and depressing, with certainty of shoddiness replacing that of entitlement to excellence which would create expectations of the vendor which Microsoft prefers to avoid.
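For the curious, the idea behind “reget” can be sketched with Python's `ftplib`, whose `retrbinary` method accepts a `rest` offset telling the server where to restart. This is a sketch under assumptions: `ftp` is an already logged-in `ftplib.FTP` object positioned in the right directory, the function name is mine, and the server must itself support restarting transfers.

```python
import os

def resume_download(ftp, file_name, local_path):
    """Continue a partial download from the local file's current length."""
    offset = os.path.getsize(local_path) if os.path.exists(local_path) else 0
    # Append to what is already there instead of starting over.
    with open(local_path, "ab") as out:
        ftp.retrbinary("RETR " + file_name, out.write, rest=offset or None)
    return offset
```

The function returns the offset from which the transfer resumed; if no partial file exists it simply downloads the whole thing.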

The cookbook approach to FTP downloading of files from links on the Web is specific to Fourmilab in that, unlike the majority of present-day Web sites, every file at Fourmilab may be downloaded either with the usual HTTP protocol used by Web browsers or via FTP by the URL rewriting trick described above. Most other sites which provide FTP access do so with specific URLs dedicated to FTP, sometimes on different machines than their Web servers. You'll have to explore such sites with your Web browser to find their FTP links.


This document is in the public domain.