Monday, August 31, 2009

Reading List: Theo Gray's Mad Science

- Gray, Theodore. Theo Gray's Mad Science. New York: Black Dog & Leventhal Publishers, 2009. ISBN 978-1-57912-791-6.

- Regular visitors here will recall that from time to time I enjoy mocking the fanatically risk-averse “safetyland” culture which has gripped the Western world over the last several decades. Pendulums do, however, have a way of swinging back, and there are a few signs that sanity (or, more accurately, entertaining insanity) may be starting to make a comeback. We've seen The Dangerous Book for Boys and the book I dubbed The Dangerous Book for Adults, but—Jeez Louise—look at what we have here! This is really The Shudderingly Hazardous Book for Crazy People. A total of fifty-four experiments (all summarised on the book's Web site) range from heating a hot tub with quicklime and water, exploding bubbles filled with a mixture of hydrogen and oxygen, making your own iron from magnetite sand with thermite, and turning a Snickers bar into rocket fuel to salting popcorn by bubbling chlorine gas through a pot of molten sodium (it ends badly). The book is subtitled “Experiments You Can Do at Home—But Probably Shouldn't”, and for many of them that's excellent advice, but they're still a great deal of fun to experience vicariously. I definitely want to try the ice cream recipe which makes a complete batch in thirty seconds flat with the aid of liquid nitrogen. The book is beautifully illustrated and gives the properties of the substances involved in the experiments. Readers should be aware that, as the author prominently notes at the outset, the descriptions of many of the riskier experiments do not provide all the information you'd need to perform them safely—you shouldn't even consider trying them yourself unless you're familiar with the materials involved and experienced in the precautions required when working with them.

Thursday, August 27, 2009

Gnome-o-gram: Adjustable Rate Mortgages, Notional Value, and the Double Dip

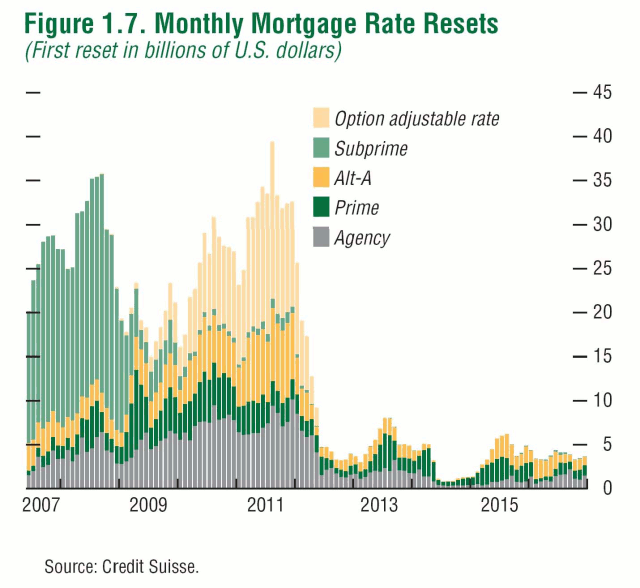

Financial markets have recovered since their March 2009 lows, and the sense of crisis and impending doom seems to have abated somewhat, although many worry about the ultimate consequences of the enormous injections of debt-financed liquidity done by the U.S. government, and forecasts for deficits and accumulation of debt which are unprecedented in the human experience. So, is it time to let out a sigh of relief, think about getting back into the markets, and devote less time and resources to planning for a worst case scenario? I don't think so; here's why. You'll recall that the proximate cause of the current financial crisis was the meltdown of “subprime” adjustable rate mortgages (ARMs) the borrowers could not possibly afford to pay when their initially low-ball interest rates “reset” to market rates. Borrowers who took out these loans were betting that by the time the loan reset, the value of the house would have appreciated sufficiently so that they'd have enough positive equity to qualify for a loan with more affordable terms with which they'd refinance and retire the ARM. This worked out just fine as long as the real estate bubble continued to inflate (indeed, along with other ill-considered policies encouraged by the U.S. government, it was one of the main reasons for the residential real estate bubble in the first place). Once property values began to level off and started to fall, however, these ARMs were a disaster. A “homeowner” with a loan for more than the property is worth isn't going to be able to refinance, and not being able to make the monthly payments once the interest resets, will have no option but to default and allow the property to be foreclosed. Even if the borrower is able to make the payments, there's still a strong incentive to default anyway rather than pay on a loan that's worth more than the house. There's even a name for ARM borrowers mailing the keys to the house back to the bank: “jingle mail”. 
The second factor which contributed to the crisis was that these mortgages were not, as in times of old, held by the bank that issued them as assets and serviced by the bank, but rather packaged up with many other mortgages and immediately resold as “mortgage-backed securities”, often of bewildering complexity, involving over-the-counter (OTC) financial derivative contracts which combined aspects of insurance, options, and futures. The mortgage-backed securities, in turn, were sold to financial institutions all around the world, which treated them, even if based upon what amounted to junk mortgages, as highly-rated debt instruments on the basis of the insurance against default provided by the derivative contracts (especially “credit default swaps”). Now you can see how the smash-up happened. The subprime ARMs, as a class, were headed to default. This violated the fundamental assumption of insurance: that pooling individual risks reduces the risks in the aggregate. When an entire asset category melts down, however, it's like an insurance company which has written homeowner policies on hundreds of thousands of houses in a city, and then an earthquake levels the entire city. In this case, the issuers of these contracts, including AIG, were liable to investors when the mortgages underlying the securities defaulted, and would have been unable to pay the claims. This, then, would have required a drastic (and almost impossible to calculate) mark-down of the value of the mortgage-backed securities, whose collapse in value may have rendered the financial institutions that owned them insolvent, setting off a chain-reaction collapse on a global scale. The principal reason for the AIG bailout was to keep this “counterparty risk” from setting the whole cataclysm into motion. 
Well, here we are coming up on a year after the wheels almost came off the global financial system in September 2008, and after the bailouts, nationalisations, forced mergers, “stimulus” packages and abundant grandstanding and demagoguery by politicians, the system is still up and running, albeit with a weak economy and increasing unemployment. So, is the worst behind us? Don't count on it. Take a look at the following chart. Note that now we're almost exactly in the trough between the end of the massive resets of subprime ARMs which precipitated the original crisis, and a rapidly approaching wave of resets of other kinds of ARMs which became popular in the wake of the subprime collapse. Note that only the dark green portion of this chart represents “prime” ARMs—everything else is lower in quality. (This chart does not show fixed-rate mortgages—only a small fraction of borrowers who meet prime credit standards opt for ARMs.) Look at the size of the “Option adjustable rate” bulge coming up. These are loans where the borrower can adjust their monthly payment, and in some cases make a payment so small that their equity actually falls. So, starting in around May 2010, and running through October 2011, we have a second wave of ARM resets which is just about as large as the subprime bulge which got us into this mess.

However, the last time we went through an ARM reset we were near the top of the real estate bubble. The next time, unless there's a rapid and dramatic recovery in the residential property market (which is not the way to bet), many of the borrowers in the next wave of resets will be “under water”: their loans are substantially larger than the current resale value of their houses, and they will therefore have an even greater incentive to go the jingle mail route.
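To make the reset arithmetic concrete, here is a minimal Python sketch. Every figure in it (the US$200,000 loan, the 4% teaser and 7% reset rates, the two-year teaser period, the US$170,000 resale value, and the US$500 option payment) is a hypothetical number chosen for illustration, not data taken from the chart, and the function names are invented for this example. It uses the standard level-payment amortisation formula.

```python
# Hypothetical illustration of an ARM reset; none of these figures are real loan data.
LOAN = 200_000          # assumed original principal, US$
TERM_MONTHS = 360       # assumed 30-year term
TEASER_RATE = 0.04      # assumed initial annual rate
RESET_RATE = 0.07       # assumed annual rate after the reset
TEASER_MONTHS = 24      # assumed length of the teaser period
HOUSE_VALUE = 170_000   # assumed resale value at reset time, US$


def monthly_payment(balance, annual_rate, months):
    """Level payment that retires `balance` in `months` at `annual_rate` (rate must be > 0)."""
    r = annual_rate / 12
    return balance * r / (1 - (1 + r) ** -months)


def balance_after(balance, annual_rate, payment, months):
    """Roll the loan forward month by month: add interest, subtract the payment."""
    for _ in range(months):
        balance += balance * annual_rate / 12 - payment
    return balance


teaser_payment = monthly_payment(LOAN, TEASER_RATE, TERM_MONTHS)
balance_at_reset = balance_after(LOAN, TEASER_RATE, teaser_payment, TEASER_MONTHS)
reset_payment = monthly_payment(balance_at_reset, RESET_RATE, TERM_MONTHS - TEASER_MONTHS)
under_water = balance_at_reset > HOUSE_VALUE

print(f"payment before reset: {teaser_payment:,.2f}")
print(f"payment after reset:  {reset_payment:,.2f}")
print(f"balance at reset:     {balance_at_reset:,.2f}  under water: {under_water}")

# An option ARM lets the borrower pay less than the interest due, so the balance grows.
option_balance = balance_after(LOAN, RESET_RATE, 500, 12)
print(f"balance after a year of 500/month payments: {option_balance:,.2f}")
```

With these made-up inputs the monthly payment rises by more than a third at the reset while the balance still exceeds the assumed resale value, which is the combination that rules out refinancing. The last two lines show the option-ARM case, where a payment smaller than the monthly interest leaves the borrower owing more than was originally borrowed.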

Now let's take a look at how successful financial institutions have been in winding down that mountain of opaque derivative contracts on their books. According to the most recent semiannual OTC derivatives statistics issued by the Bank for International Settlements (BIS), the total over-the-counter derivatives outstanding (PDF) were, in notional value, US$595 trillion in December 2007, 684 trillion in June 2008, and down to 591 trillion by December 2008. (There are no more recent figures available from the BIS.) So, the derivatives appear to be winding down, but there's a heck of a way to go!

If your head is about to explode trying to get your mind around the concept of US$600 trillion (here's a sense of what a hundredth of that looks like), let me say a few words about the “notional value” of a derivatives contract. Let's consider a very simple financial derivative, the wheat futures contract discussed earlier. Each contract is for the delivery of 5,000 bushels of wheat, and is priced in cents per bushel. The notional value of the contract is that of the underlying asset: the wheat. This contract is currently trading at around US$4.75, so the notional value of the contract is US$23,750. But to buy or sell such a contract, you only have to put up the margin, which is currently just US$2,700, and to maintain the contract you have to maintain a margin of US$2,000. If the trade goes against you and your margin falls below US$2,000 you have to either put up more margin money or be sold out of the contract at a loss. So in this case, while the full value of the asset that underlies the contract is US$23,750, the actual investment at risk is on the order of 10% of this figure. It is this leverage which makes futures markets so exciting (and risky) to trade.
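The wheat contract arithmetic can be checked in a few lines of Python. The contract size, price, and margin figures are the ones quoted above; the only assumption added here is a long position whose account starts with exactly the initial margin, which is used to work out how far the price can fall before the account reaches the maintenance level.

```python
# Figures quoted in the text for one wheat futures contract.
BUSHELS_PER_CONTRACT = 5_000
PRICE_PER_BUSHEL = 4.75        # US$
INITIAL_MARGIN = 2_700         # US$
MAINTENANCE_MARGIN = 2_000     # US$

notional_value = BUSHELS_PER_CONTRACT * PRICE_PER_BUSHEL
margin_fraction = INITIAL_MARGIN / notional_value
leverage = notional_value / INITIAL_MARGIN

# Assumption: a long position whose account holds exactly the initial margin.
# Each cent the price falls costs 5,000 cents, i.e. US$50 per contract.
margin_call_drop = (INITIAL_MARGIN - MAINTENANCE_MARGIN) / BUSHELS_PER_CONTRACT

print(f"notional value:  US${notional_value:,.0f}")
print(f"margin fraction: {margin_fraction:.1%}")
print(f"leverage:        {leverage:.1f}x")
print(f"price drop that uses up the cushion: US${margin_call_drop:.2f} per bushel")
```

On these numbers a move of about 14 cents per bushel, roughly 3% of the price, is enough to bring the account down to the maintenance margin.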

It is, of course, possible to imagine a situation in which you could lose the entire notional value of a derivatives contract. If you bought a wheat contract and, for some reason, the price of wheat went to zero overnight, you'd be on the hook for the entire value of the contract. But there's no plausible scenario in which that could happen, and since exchange-traded futures contracts are settled every day at the close of the market, actual day-to-day fluctuations are of modest size. For an over-the-counter derivative transaction, however, the actual valuation usually only happens at the expiration date, and given the complexity of many of these contracts, it can be extremely difficult to place a value on them (or, in the term of art, “mark to market”) prior to the settlement date. Further, it is entirely plausible that, say, an insurance contract covering a pool of subprime ARMs could go all the way to the full principal value of the mortgages (or something close to it) if that entire class of asset implodes, as more or less happened in recent months. This means that for many kinds of derivatives, while calculating the notional value is simple, estimating the market value of the risk to the counterparties involves subtleties and a great deal of judgement. The BIS goes ahead and tries anyway (in large part relying upon those who report data to them), and shows gross market values of outstanding OTC derivatives as US$16 trillion in December 2007, 20 trillion in June 2008, and 34 trillion in December 2008. So (to the extent you trust these figures), while the notional value has peaked and is going down, the market value (the estimation of the risk to the parties of the contract) has increased steadily over time. It's tempting to attribute this to a rising risk premium for these instruments, but it's difficult to draw conclusions from numbers which aggregate a multitude of very different securities.
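One way to see the divergence between the two BIS measures is to express gross market value as a fraction of notional value for each reporting date. The sketch below uses only the figures quoted above (in trillions of US dollars); the dictionary layout and variable names are just a convenient choice for this example.

```python
# BIS figures quoted in the text, in trillions of US$: (notional, gross market value).
bis_otc = {
    "Dec 2007": (595, 16),
    "Jun 2008": (684, 20),
    "Dec 2008": (591, 34),
}

ratios = {period: market / notional for period, (notional, market) in bis_otc.items()}

for period, ratio in ratios.items():
    print(f"{period}: gross market value is {ratio:.2%} of notional")
```

The ratio roughly doubles between December 2007 and December 2008, from under 3% to nearly 6%, even though the notional total ends the period slightly lower than it began.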

In conclusion, it looks as though in 2010 and 2011 the financial system may face a shock due to defaults on ARMs comparable in size to that of 2007–2008, and the total magnitude of outstanding derivative contracts (mortgage-based and other) remains enormous, whether measured by notional value (which obviously overstates the risk, but by how much?) or estimated market value (which depends upon many assumptions of dubious merit, especially in a crisis). The possibility of a house of cards collapse is still very much on the table, and should one begin, the flexibility of governments to avert it will have been reduced by the enormous debt they've run up trying to paper over the first wave of the collapse. The prudent investor, while earnestly hoping nothing like this comes to pass, should bear in mind that it may, and take appropriate precautions.

Friday, August 21, 2009

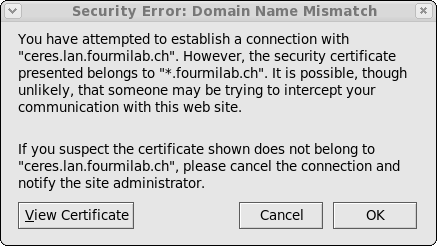

Mozilla Thunderbird "Domain Name Mismatch": Explanation and Work-Around

Today, when I first checked the computer, a popup window informed me that the latest update, version 2.0.0.23, of the Mozilla Thunderbird mail client program had been downloaded and was ready to install. As this was a security update, I went ahead with the installation immediately. After restarting, Thunderbird immediately popped up a “Domain Name Mismatch” warning dialogue, and to my dismay popped it up again every time it contacted my in-house, behind the firewall, mail server, either manually or automatically. What appears to have happened is that this security update, which is being deployed across all Mozilla Foundation products, has changed the rules for security certificates generated with wildcards. While a certificate generated for “*.fourmilab.ch” would previously be accepted for a machine with a name such as “ceres.lan.fourmilab.ch” (the mail server), now the warning pops up on every such connection. This is going to strike lots of people who use a common site-wide certificate across all the machines in a server farm, or use a single server to host sites in several different domains.
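The difference between the old and new behaviour can be sketched in Python. This is not Thunderbird's code: both function names are invented here, and the sketch assumes the stricter rule is the common one in which “*” stands for exactly one DNS label (the interpretation described in RFC 2818), since all that is known from the behaviour above is that the matching rules changed.

```python
def matches_any_depth(pattern, hostname):
    """Looser rule (assumed old behaviour): a leading '*.' covers one or more labels."""
    pattern, hostname = pattern.lower(), hostname.lower()
    if pattern.startswith("*."):
        suffix = pattern[1:]  # e.g. ".fourmilab.ch"
        return hostname.endswith(suffix) and len(hostname) > len(suffix)
    return pattern == hostname


def matches_single_label(pattern, hostname):
    """Stricter rule (assumed new behaviour): '*' stands for exactly one label."""
    pattern_labels = pattern.lower().split(".")
    host_labels = hostname.lower().split(".")
    if len(pattern_labels) != len(host_labels):
        return False
    return all(p == "*" or p == h for p, h in zip(pattern_labels, host_labels))


CERT = "*.fourmilab.ch"
MAIL_SERVER = "ceres.lan.fourmilab.ch"

print(matches_any_depth(CERT, MAIL_SERVER))                      # True
print(matches_single_label(CERT, MAIL_SERVER))                   # False
print(matches_single_label("*.lan.fourmilab.ch", MAIL_SERVER))   # True
```

Under the one-label rule the site-wide certificate no longer covers a host two levels below the domain, which is consistent with the warning appearing on every connection to the mail server. The last line only illustrates that a wildcard at the right depth would match under this rule.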

Fortunately, there is a Thunderbird add-on, “Remember Mismatched Domains”, which adds a check box to the warning dialogue that lets you accept the “mismatch” and suppress further warnings about that specific mismatch. This add-on has already been downloaded more than 125,000 times, and methinks it's about to become even more popular in the near future. Just download and install the add-on, accept the domain(s) which are generating the warning, and you're back in business.

Sunday, August 16, 2009

It's official! NASA is a jobs program.

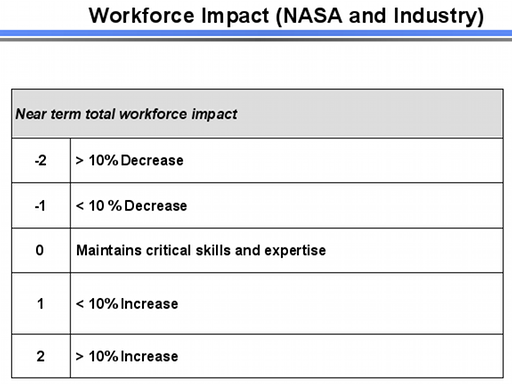

I've been listening to an audio recording of the most recent public meeting of the Review of U.S. Human Space Flight Plans Committee (Augustine Commission), held on August 12th, 2009 in Washington, D.C. This is scheduled to be the last public meeting before the final report is issued, although there is an option for another public session should one prove necessary. In the instant meeting, several “integrated options” (PPT) were presented, then a budget and schedule analysis was done for each under various funding assumptions, and the options ranked according to twelve criteria ranging from crew safety and mission success to expanding human civilisation beyond the Earth. Wanda Austin presented the “Evaluation Measures and Criteria” (PPT) to be used in this ranking. The last of these was titled “Workforce Impact (NASA and Industry)”, slide 16 in the presentation, which appears below (I've cropped and scaled the slide to fit on the page, but nothing other than the slide number has been elided).

Saturday, August 15, 2009

Reading List: The Apollo 11 Moon Landing

- Jenkins, Dennis R. and Jorge R. Frank. The Apollo 11 Moon Landing. North Branch, MN: Specialty Press, 2009. ISBN 978-1-58007-148-2.

- This book, issued to commemorate the 40th anniversary of the Apollo 11 Moon landing, is a gorgeous collection of photographs, including a number of panoramas digitally assembled from photos taken during the mission which appear here for the first time. The images cover all aspects of the mission: the evolution of the Apollo project, crew training, stacking the launcher and spacecraft, voyage to the Moon, surface operations, and return to Earth. The photos have accurate and informative captions, and each chapter includes a concise but comprehensive description of its topic. This is largely a picture book, and almost entirely focused upon the Apollo 11 mission, not the Apollo program as a whole. Unless you are an absolute space nut (guilty as charged), you will almost certainly see pictures here you've never seen before, including Neil Armstrong's brush with death when the Lunar Landing Research Vehicle went all pear-shaped and he had to punch out (p. 35). Look at how the ejection seat motor vectored to buy him altitude for the chute to open! Did you know that the iconic image of Buzz Aldrin on the Moon was retouched (or, as we'd say today, PhotoShopped)? No, I'm not talking about a Moon hoax, but just that Neil Armstrong, with his Hasselblad camera and no viewfinder, did what so many photographers do—he cut off Aldrin's head in the picture. NASA public affairs folks “reconstructed” the photo that Armstrong meant to take, but whilst airbrushing the top of the helmet, they forgot to include the OPS VHF antenna which extends from Aldrin's backpack in many other photos taken on the lunar surface. This is a great book, and a worthy commemoration of the achievement of Apollo 11. It, of course, only scratches the surface of the history of the Apollo program, or even the details of the Apollo 11 mission, but I don't know of another source which brings together so many images which evoke that singular exploit. 
The Introduction includes a list of sources for further reading, all of which I was amazed (or maybe not) to discover I had already read.

Wednesday, August 12, 2009

Reading List: Halsey's Typhoon

- Drury, Bob and Tom Clavin. Halsey's Typhoon. New York: Grove Press, 2007. ISBN 978-0-8021-4337-2.

- As Douglas MacArthur's forces struggled to expand the beachhead of their landing on the Philippine island of Mindoro on December 15, 1944, Admiral William “Bull” Halsey's Third Fleet was charged with providing round the clock air cover over Japanese airfields throughout the Philippines, both to protect against strikes being launched against MacArthur's troops and kamikaze attacks on his own fleet, which had been so devastating in the battle for Leyte Gulf three weeks earlier. After supporting the initial landings and providing cover thereafter, Halsey's fleet, especially the destroyers, were low on fuel, and the admiral requested and received permission to withdraw for a rendezvous with an oiler task group to refuel. Unbeknownst to anybody in the chain of command, this decision set the Third Fleet on a direct intercept course with the most violent part of an emerging Pacific (not so much, in this case) typhoon which was appropriately named, in retrospect, Typhoon Cobra. Typhoons in the Pacific are as violent as Atlantic hurricanes, but due to the circumstances of the ocean and atmosphere where they form and grow, are much more compact, which means that in an age prior to weather satellites, there was little warning of the onset of a storm before one found oneself overrun by it. Halsey's orders sent the Third Fleet directly into the bull's eye of the disaster: one ship measured sustained winds of 124 knots (143 miles per hour) and seas in excess of 90 feet. Some ships' logs recorded the barometric pressure as “U”—the barometer had gone off-scale low and the needle was above the “U” in “U. S. Navy”. There are some conditions at sea which ships simply cannot withstand. This was especially the case for Farragut class destroyers, which had been retrofitted with radar and communication antennæ on their masts and a panoply of antisubmarine and gun directing equipment on deck, all of which made them top-heavy, vulnerable to heeling in high winds, and prone to capsize. 
As the typhoon overtook the fleet, even the “heavies” approached their limits of endurance. On the aircraft carrier USS Monterey, Lt. (j.g.) Jerry Ford was saved from being washed off the deck to a certain death only by luck and his athletic ability. He survived, later to become President of the United States. On the destroyers, the situation was indescribably more dire. The watch on the bridge saw the inclinometer veer back and forth on each roll between 60 and 70 degrees, knowing that a roll beyond 71° might not be recoverable. They surfed up the giant waves and plunged down, with screws turning in mid-air as they crested the giant combers. Shipping water, many lost electrical power due to shorted-out panels, and most lost their radar and communications antennæ, rendering them deaf, dumb, and blind to the rest of the fleet and vulnerable to collisions. The sea took its toll: in all, three destroyers were sunk, a dozen other ships were hors de combat pending repairs, and 146 aircraft were destroyed, all due to weather and sea conditions. A total of 793 U.S. sailors lost their lives, more than twice those killed in the Battle of Midway. This book tells, based largely upon interviews with people who were there, the story of what happens when an invincible fleet encounters impossible seas. There are tales of heroism every few pages, which are especially poignant since so many of the heroes had not yet celebrated their twentieth birthdays, hailed from landlocked states, and had first seen the ocean only months before at the start of this, their first sea duty. After the disaster, the heroism continued: the crew of the destroyer escort Tabberer, under its reservist commander Henry L. Plage, who disregarded his orders, persisted in the search and rescue of survivors from the foundered ships even after their own ship was dismasted and severely damaged, eventually saving 55 from the ocean. 
Plage expected to face a court martial, but instead was awarded the Legion of Merit by Halsey, whose orders he had ignored. This is an epic story of seamanship, heroism, endurance, and the nigh impossible decisions commanders in wartime have to make based upon the incomplete information they have at the time. You gain an appreciation for how the master of a ship has to balance doing things by the book and improvising in exigent circumstances. One finding of the Court of Inquiry convened to investigate the disaster was that the commanders of the destroyers which were lost may have given too much priority to following pre-existing orders to hold their stations as opposed to the overriding imperative to save the ship. Given how little experience these officers had at sea, this is not surprising. CEOs should always keep in mind this utmost priority: save the ship. Here we have a thoroughly documented historical narrative which is every bit as much a page-turner as the latest ginned-up thriller. As it happens, one of my high school teachers was a survivor of this storm (on one of the ships which did not go down), and I remember to this day how harrowing it was when he spoke of destroyers “turning turtle”. If accounts like this make you lose sleep, this is not the book for you, but if you want to experience how ordinary people did extraordinary things in impossible circumstances, it's an inspiring narrative.

Friday, August 7, 2009

Reading List: Separation of Power

- Flynn, Vince. Separation of Power. New York: Pocket Books, [2001] 2009. ISBN 978-1-4391-3573-0.

- Golly, these books go down smoothly, and swiftly too! This is the third novel in the Mitch Rapp (warning—the article at this link contains minor spoilers) series. It continues the “story arc” begun in the second novel, The Third Option (June 2009), and picks up just two weeks after the conclusion of that story. While not leaving the reader with a cliffhanger, that book left many things to be resolved, and this novel sorts them out, administering summary justice to the malefactors behind the scenes. The subject matter seems drawn from current and recent headlines: North Korean nukes, “shock and awe” air strikes in Iraq, special forces missions to search for Saddam's weapons of mass destruction, and intrigue in the Middle East. What makes this exceptional is that this book was originally published in 2001—before! It holds up very well when read eight years later although, of course, subsequent events sadly didn't go the way the story envisaged. There are a few goofs: copy editors relying on the spelling checker instead of close proofing allowed a couple of places where “sight” appeared where “site” was intended, and a few other homonym flubs. I'm also extremely dubious that weapons with the properties described would have been considered operational without having been tested. And the premise of the final raid seems a little more like a video game than the circumstances of the first two novels. As one who learnt a foreign language in adulthood, I can testify that it is extraordinarily difficult to speak without an obvious accent. Is it plausible that Mitch can impersonate a figure that the top-tier security troops guarding the bunker have seen on television many times? Still, the story works, and it's a page turner. The character of Mitch Rapp continues to darken in this novel. 
He becomes ever more explicitly an assassin, and notwithstanding however much his targets “need killin'”, it's unsettling to think of agents of coercive government sent to eliminate people those in power deem inconvenient, and difficult to consider the eliminator a hero. But that isn't going to keep me from reading the next in the series in a month or two. See my comments on the first installment for additional details about the series and a link to an interview with the author.

Penumbral Lunar Eclipse Imaged


One of the most subtle phenomena in naked eye astronomy is a penumbral lunar eclipse: the Moon does not pass into the region of the Earth's shadow where the Sun is entirely obscured by the Earth, and consequently, although sunlight on some or all of the Moon is partly blocked by the Earth, the visual effect is very difficult to perceive, especially with the logarithmic transfer function of human vision and its inability to make absolute intensity comparisons of events. Fortunately, here in the 21st century, although we don't (yet) have ATOMIC SPACE CARS, we do have magnificently linear digital sensors in our cameras, so it seemed entirely plausible to me that I'd be able to capture the ever so slight shading of the Earth's fuzzy shadow on the Moon during the penumbral lunar eclipse of August 6th, 2009. So, I set up my Nikon D300 camera and Nikkor 500 mm catadioptric “mirror lens” (you can see them here in another context) about an hour before the eclipse was to begin to allow the optics to equilibrate to the ambient temperature. Then, just before the start of the eclipse, I shot a number of photos of the Moon with various exposure times (although, from previous experience, I was pretty sure 1/500 second with the fixed f/8 aperture of the lens and highest resolution 200 ISO sensitivity of the camera would yield the best results which, in the event, they did). I selected the best pre- and mid-eclipse images with the same exposure time (this was a bit of a judgement call, since as is often the case in mid-summer the sky remains pretty gunky even in the middle of the night, and seeing was iffy throughout the eclipse; but then, I'm accustomed to that). The raw images from the camera sensor were processed using identical parameters with Adobe Lightroom, then exported as TIFF files which were loaded into Adobe Photoshop. 
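Why identical exposure and processing settings matter can be sketched in a few lines. With a linear sensor, the per-pixel ratio of the mid-eclipse frame to the pre-eclipse frame is a direct estimate of the fraction of sunlight still reaching that part of the Moon. This is only an illustration and not the workflow used for the image: it assumes the two frames are already registered, that pixel values are linear intensities, and the function names, the `floor` threshold, and the toy pixel values are all invented for the example.

```python
def dimming_ratio(pre, mid, floor=0.05):
    """Per-pixel ratio mid/pre for two aligned, linear-intensity frames.

    Pixels at or below `floor` in the pre-eclipse frame (sky background)
    are returned as None so they do not produce meaningless ratios.
    """
    if len(pre) != len(mid) or any(len(a) != len(b) for a, b in zip(pre, mid)):
        raise ValueError("frames must have identical dimensions")
    return [
        [m / p if p > floor else None for p, m in zip(pre_row, mid_row)]
        for pre_row, mid_row in zip(pre, mid)
    ]


def mean_ratio(ratios):
    """Average of the defined (non-None) ratios."""
    values = [r for row in ratios for r in row if r is not None]
    return sum(values) / len(values)


# Toy 2x4 frames (invented numbers): 0.0 is sky, the rest is lunar disc.
pre = [[0.0, 0.8, 0.8, 0.8],
       [0.0, 0.6, 0.6, 0.6]]
# The penumbral shading deepens toward the lower right of this toy frame.
mid = [[0.0, 0.8, 0.76, 0.72],
       [0.0, 0.54, 0.51, 0.48]]

ratios = dimming_ratio(pre, mid)
```

A ratio near 1.0 means no measurable dimming, and smaller values mark where the penumbra falls. Had the two frames been processed with different settings, the ratio would mix processing differences with the eclipse itself.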
Due to the interval between the taking of the images, there was an apparent rotation of the Moon, which I corrected for (almost) with the Photoshop free transform tool. (Yes, there's a tiny bit of residual rotation—so sue me.) I did not do any contrast stretching or other adjustments to the luminosity transfer function. Within the limits of the camera and the software tools in the workflow, this is what the image plane sensor saw. And it saw the penumbral eclipse! Look at the lower left side of the image above, and you can see the effect of the Earth partially obscuring the Sun painted upon the Moon. Few people have ever perceived this visually—certainly I did not; the Moon's disc was sufficiently blinding both before the eclipse and at its maximum that there was no clue such a subtle eclipse was underway. And yet a digital camera and a modicum of image processing can dig out from the raw pixels raining upon us from the sky what our eyes cannot see. Is this cool, or what?

Monday, August 3, 2009

Reading List: As They See 'Em

- Weber, Bruce. As They See 'Em. New York: Scribner, 2009. ISBN 978-0-7432-9411-9.

- In what other game is a critical dimension of the playing field determined on the fly, based upon the judgement of a single person, not subject to contestation or review, and depending upon the physical characteristics of a player, not to mention (although none dare discuss it) the preferences of the arbiter? Well, that would be baseball, where the plate umpire is required to call balls and strikes (about 160 called in an average major league game, with an additional 127 in which the batter swung at the pitch). A fastball from a major league pitcher, if right down the centre, takes about 11 milliseconds to traverse the strike zone, so that's the interval the umpire has, in the best case, to call the pitch. But big league pitchers almost never throw pitches over the fat part of the plate for the excellent reason that almost all hitters who have made it to the Show will knock such a pitch out of the park. So umpires have to call an endless series of pitches that graze the corners of the invisible strike zone, curving, sinking, sliding, whilst making their way to the catcher's glove, which wily catchers will quickly shift to make outside and inside pitches appear to be over the plate.
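The 11 millisecond figure is easy to sanity-check. The sketch below assumes the strike zone is about 17 inches deep (the front-to-back depth of home plate) and that the ball crosses it at constant speed. Neither the depth nor the pitch speed is stated in the book review, so both are assumptions, and the function name is made up for the example.

```python
PLATE_DEPTH_IN = 17.0  # assumed front-to-back depth of the strike zone, inches


def strike_zone_transit_ms(speed_mph, depth_in=PLATE_DEPTH_IN):
    """Milliseconds for a ball moving at speed_mph to cover depth_in inches."""
    if speed_mph <= 0:
        raise ValueError("speed must be positive")
    # 1 mile = 5280 feet = 63,360 inches; 1 hour = 3600 seconds.
    inches_per_second = speed_mph * 5280 * 12 / 3600
    return depth_in / inches_per_second * 1000.0


for mph in (85, 88, 95, 100):
    print(f"{mph} mph: {strike_zone_transit_ms(mph):.1f} ms")
```

Under these assumptions, a pitch crossing the plate at about 88 miles per hour takes very nearly 11 milliseconds, and even a 100 mile per hour pitch takes a little under 10, so the figure quoted is the right order of magnitude for any major league fastball.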

Major league umpiring is one of the most élite occupations in existence. At present, only sixty-eight people are full-time major league umpires and typically only one or two replacements are hired per year. Including minor leagues, there are fewer than 300 professional umpires working today, and since the inception of major league baseball, fewer than five hundred people have worked games as full-time umpires. What's it like to pursue a career where if you do your job perfectly you're at best invisible, but if you make an error or, even worse, make a correct call that inflames the passion of the fans of the team it was made against, you're the subject of vilification and sometimes worse (what other sport has the equivalent of the cry from the stands, “Kill the umpire!”)? In this book, the author, a New York Times journalist, delves into the world of baseball umpiring, attending one of the two schools for professional umpires, following nascent umpires in their careers in the rather sordid circumstances of Single A ball (umpires have to drive from game to game on their own wheels—they get a mileage allowance, but that's all; often their accommodations qualify for my Sleazy Motel Roach Hammer Awards).

The author follows would-be umpires through school, the low minors, AA and AAA ball, and the bigs, all the way to veterans and the special pressures of the playoffs and the World Series. There are baseball anecdotes in abundance here: bad calls, high profile games where the umpire had to decide an impossible call, and the author's own experience behind the plate at an intersquad game in spring training where he first experienced the difference between play at the major league level and everything else—the clock runs faster. Relativity, dude—get used to it!

You think you know the rulebook? Fine—a runner is on third with no outs and the batter has a count of one ball and two strikes. The runner on third tries to steal home, and whilst sliding across the plate, is hit by the pitch, which is within the batter's strike zone. You make the call—50,000 fans and two irritable managers are waiting for you. What'll it be, ump? You have 150 milliseconds to make your call before the crowd starts to boo. (The answer is at the end of these remarks.) Bear in mind before you answer that any major league umpire gets this right 100% of the time—it's right there in the rulebook in section 6.05 (n).

Believers in “axiomatic baseball” may be dismayed at some of the discretion documented here by umpires who adjust the strike zone to “keep the game moving along” (encouraged by a “pace of game” metric used by their employer to rate them for advancement). I found the author's deliberately wrong call in a Little League blowout game (p. 113) reprehensible, but reasonable people may disagree.

As of January 2009, 289 people have been elected to the Baseball Hall of Fame. How many umpires? Exactly eight—can you name a single one? Umpires agree that they do their job best when they are not noticed, but there will be those close calls where their human judgement and perception make the difference, some of which may be, even in this age of instant replay, disputed for decades afterward. As one umpire said of a celebrated contentious call, “I saw what I saw, and I called what I saw”. The author concludes:

Baseball, I know, needs people who can not only make snap decisions but live with them, something most people will do only when there's no other choice. Come to think of it, the world in general needs people who accept responsibility so easily and so readily. We should be thankful for them.

Batter up! Answer: The run scores, the batter is called out on strikes, and the ball is dead. Had there been two outs, the third strike would have ended the inning and the run would not have scored (p. 91).
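For those who think in code rather than rulebooks, the answer above reduces to a single branch on the number of outs. This is only a sketch of the two-strike situation as described in these remarks, not a transcription of the rulebook text; the `Ruling` class and the function name are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ruling:
    batter_out: bool
    run_scores: bool
    inning_over: bool


def rule_steal_of_home(outs: int) -> Ruling:
    """Ruling for the two-strike case described in the review.

    Situation: a runner stealing home from third is hit by a pitch that
    is within the batter's strike zone, with two strikes already on the
    batter. `outs` is the number of outs before the pitch.
    """
    if outs not in (0, 1, 2):
        raise ValueError("outs before the pitch must be 0, 1, or 2")
    if outs == 2:
        # Strike three is the third out: inning over, the run does not score.
        return Ruling(batter_out=True, run_scores=False, inning_over=True)
    # Fewer than two outs: strike three, the ball is dead, and the run scores.
    return Ruling(batter_out=True, run_scores=True, inning_over=False)


print(rule_steal_of_home(outs=0))
```

The umpire, of course, has to evaluate this in 150 milliseconds with 50,000 people watching.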